AI Adoption: Why Organizations Must Govern for Resilient, Assured, and Accountable Outcomes

Rick Lemieux – Co-Founder and Chief Product Officer of the DVMS Institute

Introduction: AI as a Driver of Business Model Transformation

Artificial intelligence (AI) is rapidly transforming how enterprises create value, compete, and operate. Organizations are increasingly embedding AI into core business processes, customer engagement models, decision-making systems, and digital products. Rather than merely automating tasks, AI is reshaping entire business models—enabling predictive services, data-driven platforms, autonomous operations, and new forms of digital value creation.

While these opportunities are immense, the use of AI at the business model level introduces significant risks and complexities. AI systems can influence strategic decisions, automate critical operations, and impact stakeholders at scale. Without effective governance, enterprises risk unreliable outcomes, regulatory violations, reputational damage, and loss of trust.

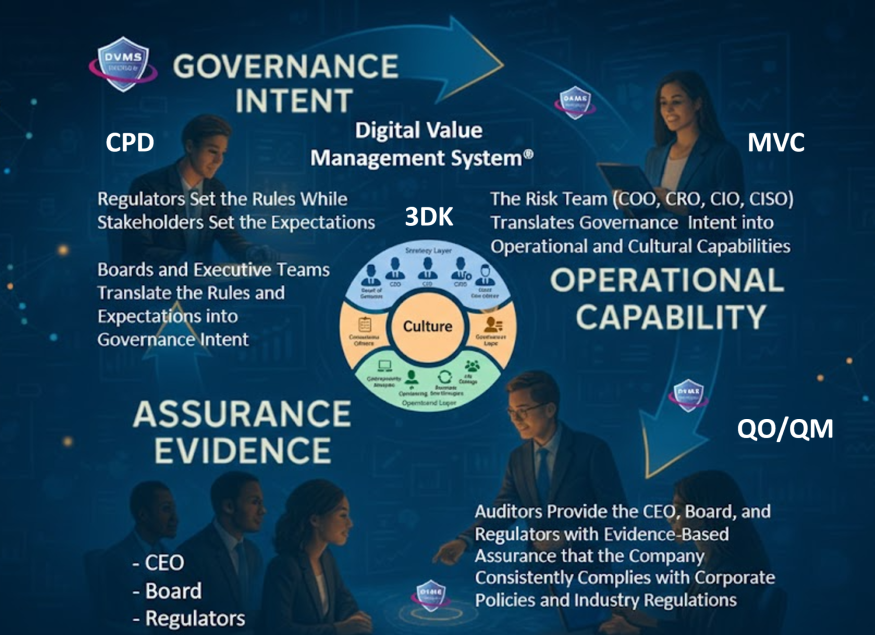

Therefore, organizations transforming their business models with AI must establish governance mechanisms that ensure outcomes are resilient, assured, and accountable. Governance provides the structures, policies, and oversight to responsibly manage AI-driven operations while enabling innovation and sustainable value creation.

The Growing Complexity of AI-Enabled Enterprises

AI-driven enterprises operate within highly complex digital ecosystems. AI systems rely on vast datasets, advanced algorithms, cloud infrastructures, and interconnected digital services. These components interact dynamically with human decision-makers, business processes, and external partners. As AI becomes embedded in business models, such as predictive maintenance services, algorithmic pricing, automated supply chains, or AI-driven customer experiences, the potential impact of system failures or biased outcomes increases dramatically.

This complexity makes it difficult for organizations to fully understand how AI systems influence business decisions and operational performance. AI models may change over time as they are retrained or continue to learn from new data, making their outcomes less predictable than those of traditional software systems. Additionally, AI-driven services often depend on third-party data sources, APIs, and platform ecosystems, increasing the enterprise’s exposure to risk.

Governance is therefore essential to manage this complexity. Effective governance ensures that organizations maintain visibility into how AI systems operate, how decisions are made, and how risks are mitigated. By establishing clear policies, roles, and accountability structures, governance enables enterprises to harness AI’s transformative potential while maintaining operational control and transparency.

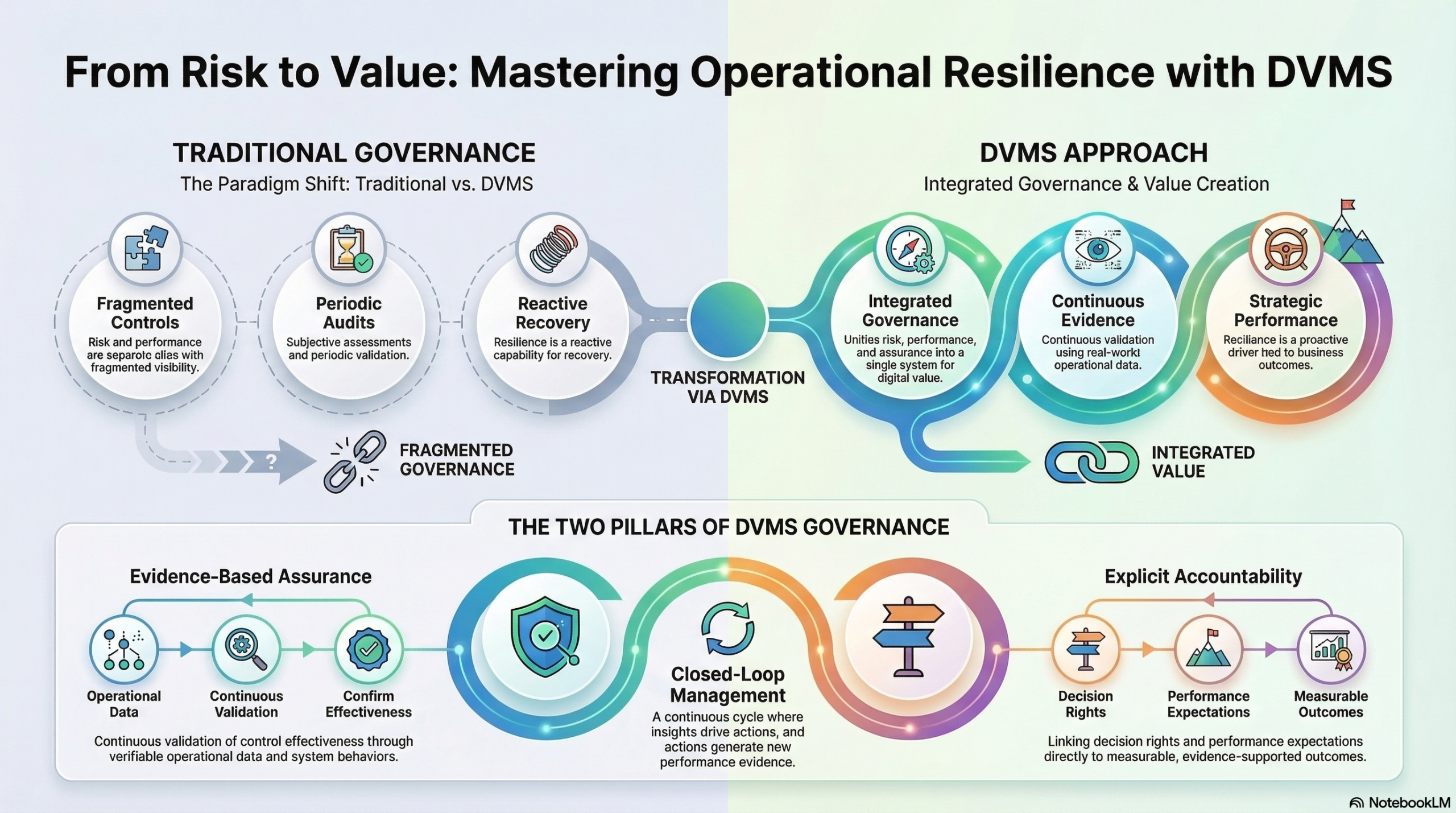

Ensuring Operational Resilience in AI-Driven Business Models

Operational resilience refers to an organization’s ability to continue delivering critical services despite disruptions, failures, or unexpected events. As enterprises increasingly rely on AI for core business functions, resilience becomes a key governance concern. AI systems may fail due to data quality issues, model drift, cybersecurity threats, infrastructure outages, or unintended algorithmic behaviors. When AI systems support essential services, such as financial transactions, healthcare decision support, logistics optimization, or energy grid management, such failures can have significant consequences.

Governance frameworks help organizations design AI-enabled systems that are resilient by design. This includes establishing risk management practices, monitoring mechanisms, and contingency planning processes. Enterprises must implement continuous model validation, performance monitoring, and incident response procedures to ensure that AI systems operate reliably over time.
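As one illustration of what continuous performance monitoring might look like in practice, the sketch below compares a baseline sample of a model input (or score) with a recent sample using the Population Stability Index, a commonly used drift measure. The function names, the ten-bin layout, and the 0.10 and 0.25 thresholds are assumptions chosen for illustration (the thresholds are a widely cited rule of thumb), not values prescribed by this article.

```python
import math
from typing import Sequence


def population_stability_index(
    baseline: Sequence[float], current: Sequence[float], bins: int = 10
) -> float:
    """Compare two samples of one model input or score; 0.0 means identical bin shares."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def bin_shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range are clamped into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor keeps the logarithm defined for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    b, c = bin_shares(baseline), bin_shares(current)
    return sum((ci - bi) * math.log(ci / bi) for bi, ci in zip(b, c))


def drift_status(psi: float, warn: float = 0.10, alert: float = 0.25) -> str:
    """Map a PSI value to a monitoring status (thresholds are illustrative assumptions)."""
    if psi >= alert:
        return "alert"
    if psi >= warn:
        return "warn"
    return "stable"
```

In a governed setting, an "alert" status would typically feed the incident response procedure rather than trigger an automatic model change, so that a named owner reviews the drift before action is taken.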

Resilience governance also requires organizations to understand dependencies within their digital ecosystem. AI models depend on data pipelines, cloud platforms, and external services that may introduce vulnerabilities. By governing these dependencies and establishing redundancy strategies, enterprises can reduce the risk of systemic disruptions.
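One simple form of redundancy is an ordered fallback chain: if the primary AI service fails, a secondary service or a conservative rule-based default answers instead. The following is a minimal sketch under that assumption; `call_with_fallback` and the provider callables are hypothetical names introduced here, not part of any specific platform.

```python
from typing import Callable, Sequence, TypeVar

T = TypeVar("T")


def call_with_fallback(providers: Sequence[Callable[[], T]]) -> tuple[T, int]:
    """Try each provider in order; return (result, index of the provider that answered)."""
    errors: list[Exception] = []
    for index, provider in enumerate(providers):
        try:
            return provider(), index
        except Exception as exc:  # record the failure and move to the next provider
            errors.append(exc)
    raise RuntimeError(f"all {len(providers)} providers failed: {errors}")


def primary_model() -> str:
    # Hypothetical stand-in for an external AI service that is currently unavailable.
    raise TimeoutError("primary model endpoint unavailable")


def rule_based_default() -> str:
    # Hypothetical conservative default used when no model can answer.
    return "route to manual review"


result, answered_by = call_with_fallback([primary_model, rule_based_default])
```

Returning the index of the provider that answered lets monitoring record how often the fallback path is used, which is itself a useful resilience indicator.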

Ultimately, resilience governance ensures that AI-driven operations can withstand unexpected events while continuing to deliver reliable outcomes to customers and stakeholders.

Assurance: Building Confidence in AI Outcomes

While resilience focuses on the ability to withstand disruptions, assurance focuses on the confidence that AI systems are performing as intended. In AI-enabled enterprises, assurance is critical because many decisions and actions are automated or augmented by algorithms. Stakeholders, including customers, regulators, investors, and employees, must be able to trust that AI-driven outcomes are accurate, fair, and aligned with organizational objectives.

Assurance involves validating that AI systems operate within defined parameters and produce reliable results. This requires robust testing, verification, and performance monitoring practices. Organizations must ensure that data used to train AI models is representative, accurate, and ethically sourced. They must also continuously evaluate model performance to detect bias, drift, or unexpected behaviors.
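To make the idea of bias evaluation concrete, the sketch below computes approval rates per group and the gap between the highest and lowest rate, a measure often called the demographic parity difference. It is only one of several fairness measures, and the input shape (pairs of group label and approval decision) and function names are assumptions made for illustration.

```python
from collections import defaultdict
from typing import Iterable


def selection_rates(decisions: Iterable[tuple[str, bool]]) -> dict[str, float]:
    """Return the share of approved decisions for each group."""
    totals: dict[str, int] = defaultdict(int)
    approved: dict[str, int] = defaultdict(int)
    for group, was_approved in decisions:
        totals[group] += 1
        approved[group] += int(was_approved)
    return {group: approved[group] / totals[group] for group in totals}


def parity_gap(decisions: Iterable[tuple[str, bool]]) -> float:
    """Difference between the highest and lowest group approval rate."""
    rates = selection_rates(decisions)
    return max(rates.values()) - min(rates.values())


# Hypothetical decision log: group A is approved 2 of 4 times, group B 1 of 4 times.
sample = [
    ("A", True), ("A", True), ("A", False), ("A", False),
    ("B", True), ("B", False), ("B", False), ("B", False),
]
gap = parity_gap(sample)
```

What size of gap is acceptable is a policy decision for the organization and its regulators; the code only produces the number that such a policy would be applied to.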

Governance plays a crucial role in establishing assurance mechanisms. Clear policies and standards must define how AI systems are developed, deployed, and maintained. Independent oversight functions—such as internal audit, risk management, and compliance teams—should evaluate AI systems against defined criteria for reliability, fairness, and regulatory compliance.

Assurance also involves documentation and traceability. Enterprises must maintain records of model development processes, training data sources, decision logic, and performance metrics. This transparency enables organizations to demonstrate compliance with emerging AI regulations and industry standards while building trust with stakeholders.
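The record-keeping described above could be represented as a structured, versioned entry per model. The following is a minimal sketch assuming a simple in-house record format; the `ModelRecord` class, its field names, and the example values are hypothetical and not drawn from any specific regulation or standard. The fingerprint is a SHA-256 hash of the record's contents, which makes later changes to a stored record detectable.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field


@dataclass(frozen=True)
class ModelRecord:
    """One traceability entry for a deployed model version (illustrative fields)."""
    model_name: str
    version: str
    owner: str
    training_data_sources: tuple[str, ...]
    decision_logic_summary: str
    performance_metrics: dict[str, float] = field(default_factory=dict)

    def fingerprint(self) -> str:
        """Stable hash of the record contents, usable as an audit reference."""
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


# Hypothetical example entry.
record = ModelRecord(
    model_name="credit-approval",
    version="1.4.0",
    owner="risk-analytics-team",
    training_data_sources=("loan_applications_2023", "bureau_scores_2023"),
    decision_logic_summary="Gradient-boosted classifier; approve above a score threshold",
    performance_metrics={"auc": 0.87},
)
audit_reference = record.fingerprint()
```

Storing the fingerprint alongside each automated decision would let an auditor trace a given outcome back to the exact model version and data sources that produced it.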

Accountability in Algorithmic Decision-Making

One of the most critical challenges in AI-driven business models is establishing accountability. AI systems can influence decisions that affect customers, employees, and society at large. For example, algorithms may determine credit approvals, insurance premiums, hiring recommendations, or supply chain prioritization. When outcomes are automated, it can become difficult to determine who is responsible for the decisions made by these systems.

Governance frameworks address this challenge by clearly defining roles and responsibilities related to AI oversight. Organizations must establish accountability for AI outcomes across multiple levels, including executive leadership, technology teams, risk management functions, and operational units. Senior leadership must ensure that AI initiatives align with organizational strategy, ethical principles, and regulatory requirements.

Accountability also requires transparency in algorithmic decision-making. Enterprises should implement explainability mechanisms that allow stakeholders to understand how AI systems reach their conclusions. This is particularly important in regulated industries where decisions must be justified and audited.

Furthermore, governance should establish escalation processes for addressing AI-related incidents or ethical concerns. When AI systems produce unintended consequences, such as biased outcomes or operational disruption, organizations must have clear mechanisms for investigation, remediation, and corrective action.

Governance as a Strategic Enabler for AI Transformation

Some organizations view governance as a constraint that slows innovation. However, in the context of AI-driven transformation, governance is a strategic enabler. By establishing clear rules, accountability structures, and assurance mechanisms, governance enables organizations to scale AI adoption safely and sustainably.

Enterprises that lack governance often encounter barriers to AI deployment. Concerns about risk, compliance, and ethical implications can delay initiatives or create internal resistance. Conversely, organizations with strong governance frameworks can confidently deploy AI solutions because they have mechanisms in place to manage potential risks.

Governance also supports alignment between technology initiatives and business objectives. AI investments should not be isolated technical experiments but integrated components of enterprise strategy. Governance structures ensure that AI initiatives deliver measurable business value while supporting long-term organizational goals.

Additionally, effective governance fosters stakeholder trust. Customers, regulators, and partners are more likely to engage with organizations that demonstrate responsible AI practices. Trust becomes a competitive advantage in markets where digital services increasingly rely on automated decision-making.

Integrating Governance with Enterprise Frameworks

To effectively govern AI transformation, enterprises should integrate AI governance into broader enterprise governance frameworks. This includes aligning AI governance with risk management, cybersecurity, compliance, and digital governance structures. Frameworks such as enterprise architecture governance, cybersecurity frameworks, and digital value management approaches can provide structured mechanisms for overseeing AI-enabled capabilities.

Integrated governance ensures that AI initiatives are managed in the broader context of enterprise performance, resilience, and risk management. It enables organizations to monitor AI-driven capabilities within their overall digital ecosystem, ensuring consistent oversight and coordination across departments.

By embedding AI governance within enterprise frameworks, organizations can establish a unified approach to managing digital transformation. This holistic perspective is essential for maintaining control over increasingly complex and interconnected digital operations.

Conclusion: Governing AI for Sustainable Digital Value

AI has the potential to fundamentally reshape enterprise business models, enabling new forms of digital value creation and competitive advantage. However, the transformative power of AI also introduces significant risks related to reliability, transparency, and responsibility. Enterprises that fail to govern AI effectively may experience operational disruptions, regulatory penalties, and erosion of stakeholder trust.

Governance provides the foundation for managing these challenges. By establishing structures that promote resilience, organizations can ensure that AI-enabled operations remain reliable even in the face of disruptions. Through assurance, enterprises can build confidence that AI systems perform as intended and align with ethical and regulatory expectations. By enforcing accountability, organizations can ensure that humans remain responsible for the outcomes produced by automated systems.

Ultimately, enterprises that integrate governance into their AI transformation strategies will be better positioned to realize the full potential of artificial intelligence. Governance does not hinder innovation; rather, it enables organizations to innovate responsibly, scale AI capabilities confidently, and deliver sustainable digital value in an increasingly complex and automated world.

About the Author

Rick Lemieux

Co-Founder and Chief Product Officer of the DVMS Institute

Rick has 40+ years of passion and experience creating solutions to give organizations a competitive edge in their service markets. In 2015, Rick was identified as one of the top five IT Entrepreneurs in the State of Rhode Island by the TECH 10 awards for developing innovative training and mentoring solutions for boards, senior executives, and operational stakeholders.

Digital Value Management System® is a registered trademark of the DVMS Institute LLC.

© 2026 DVMS Institute. All Rights Reserved.